It starts with one request

"We need GPUs. We're training a model. Can you provision it this week?"

You've heard this. Maybe last quarter. Maybe last week. You provision a GPU node pool, hand over access, and close the ticket.

Then another team asks. Then the data science org. Then two LLM initiatives from leadership with an exec sponsor.

Suddenly, you're not running apps on Kubernetes anymore. You're running an AI platform. And the tooling you've built wasn't designed for this.

Three types of people now depend on your cluster

When AI workloads land on enterprise Kubernetes, they bring three very different users, each pulling the same scarce hardware in different directions:

You sit in the middle. Everyone else's productivity depends on how well you manage the GPU pool. And right now, most platform teams are managing it with YAML, spreadsheets, and a lot of Slack messages.

What actually happens inside most companies

It usually follows the same arc:

- One data science team gets access to GPUs. It works fine.

- A second team joins the cluster. You manually create a namespace and set some ResourceQuotas.

- A third team asks for A100s. You realise you have T4s and H100s on separate clusters with no shared quota context.

- A training job preempts a live inference endpoint. You find out when an SLA breaks.

- Leadership asks for a GPU utilisation report. You open a spreadsheet.

This is not a hypothetical. It's the sequence we've seen play out across teams building AI infrastructure on Kubernetes today.

The problems that emerge at scale

These aren't edge cases. They're the exact friction points that appear once more than one team shares GPU infrastructure:

No fair scheduling between teams: Vanilla Kubernetes has no native fairness mechanism for GPU workloads. The team that submits first consumes everything. Everyone else waits with no ETA, no visibility, no recourse.

Training jobs silently preempt inference: A long-running PyTorchJob can displace a live inference endpoint if priorities aren't carefully configured. You find out when users report errors, not before.

GPU idle time is invisible: A team reserves 8 A100s for a job that finishes in 2 hours but holds the nodes for 12. That's tens of thousands of dollars in GPU capacity sitting idle, with no visibility into who holds it and no mechanism to reclaim it.

Multi-cluster quotas don't exist out of the box: Your T4 cluster and your H100 cluster have no shared quota context. Teams learn to game the system, submit to whichever cluster has headroom. You lose cost visibility entirely.

Onboarding a new AI team is a 3-day ops task: Creating namespaces, configuring Kubeflow profiles, setting up Kueue ClusterQueues and LocalQueues, wiring RBAC, none of it is automated. You do it by hand every time.

Kueue helps with scheduling. Kubeflow handles jobs. KServe manages inference. But nothing ties them together with team context, quota governance, and operator visibility. That gap is what platform teams are stitching together by hand.

What platform teams actually end up doing

In the absence of purpose-built tooling, we've seen platform engineers resort to:

- Fielding GPU allocation requests over Slack and manually editing quota configs

- Tracking GPU usage across teams in a shared spreadsheet

- Writing custom scripts to reconcile Kubeflow job states with Kueue queues

- Building internal dashboards to surface utilisation that their existing tools don't expose

- Creating namespaces and Kubeflow profiles by hand each time a new team onboards

This works until it doesn't. And it stops working faster than you expect.

What we're building at Devtron

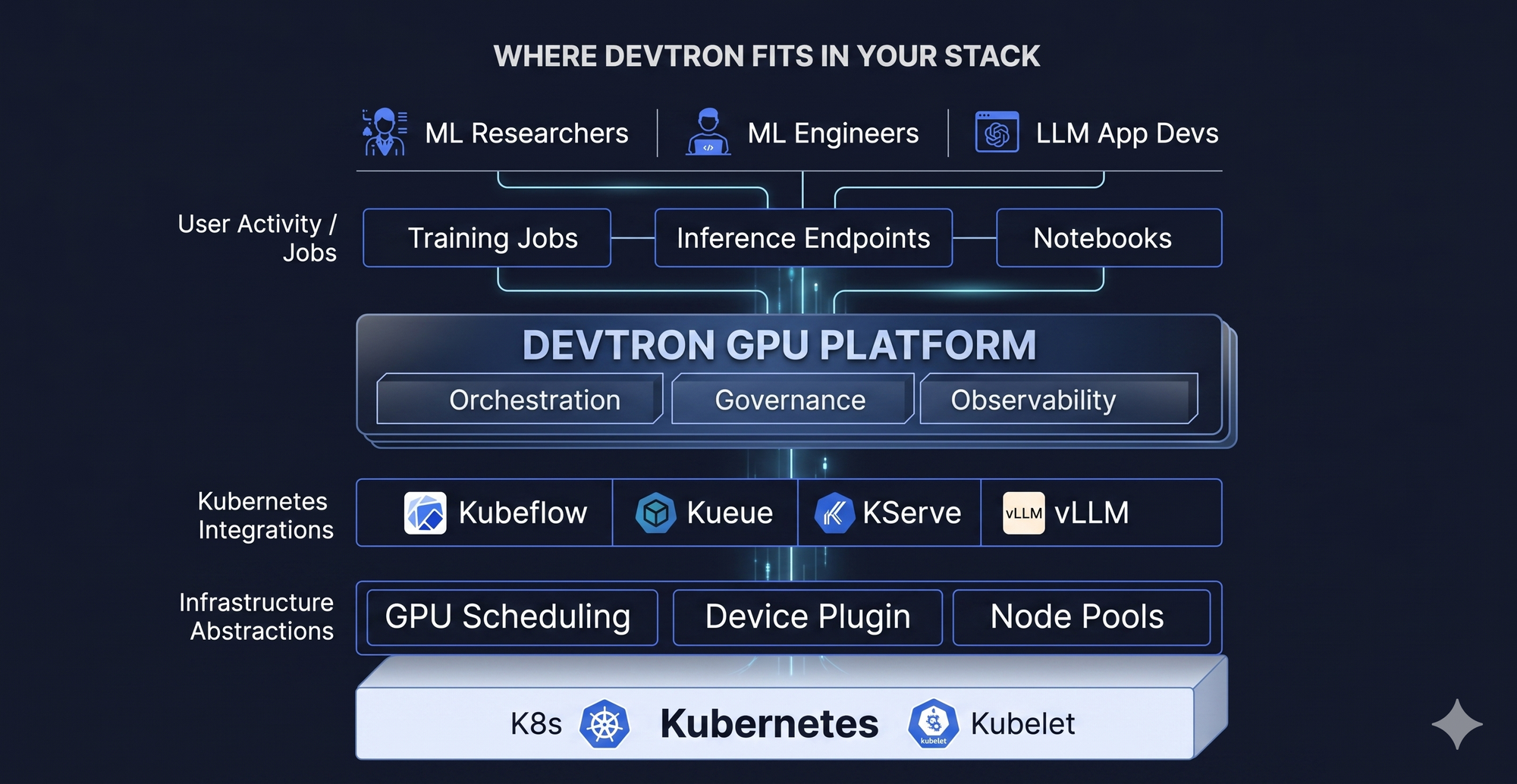

We're extending the Devtron platform to cover the full GPU workload lifecycle on Kubernetes, from notebook-based experimentation to production inference, with enterprise-grade controls layered on top.

Built on Kubeflow and Kueue under the hood. Surfaced through the Devtron experience your team already uses. No new stack to adopt.

This is not a rip-and-replace. It extends the Kubernetes, Kubeflow, and Kueue investments you've already made, and adds the team context, observability, and governance layer that's missing today.

Why we want design partners, not beta testers

We're at the prototype stage. The architecture is taking shape, and the questions we're still validating are exactly the ones you live with every day:

- How are teams actually developing on Kubernetes, notebooks first, or direct job submission?

- What does the handoff from training to inference look like in your org?

- How do you define and enforce GPU quotas across teams today — manually, or with tooling?

- What breaks first when a new AI team lands on your platform?

We're onboarding a small, deliberate group of platform and DevOps teams who are dealing with GPU orchestration on Kubernetes today. Not a waitlist. Not a feature release announcement.

Design partners get:

- Early access to the prototype before public release

- Direct input on what gets built, your workflows shape the roadmap

- A dedicated engineering contact for feedback and iteration

In return, we ask for honest feedback from your actual workflows. Not hypotheticals. Not a survey.

GPU infrastructure is becoming a core platform problem

Kubernetes was built for stateless microservices. AI workloads break almost every assumption it was designed around — long job durations, heterogeneous hardware, shared scarce resources, competing SLAs between training and inference.

Platform teams who figure out GPU orchestration now will have a meaningful advantage as AI workloads scale inside their orgs. Those who patch it together with scripts and spreadsheets will spend the next two years firefighting.

The teams who get ahead of this problem won't be the ones with the most GPUs. They'll be the ones who built the right platform around them.

We're onboarding a small group of platform teams managing GPU workloads on Kubernetes today.

Early access. Direct line to the engineering team. Real influence on the roadmap.

Apply for early access → https://devtron.ai/platform/gpu-orchestration

Or reach out directly, we want to talk before we build too much in the wrong direction.