With the rise of containers, many organizations are moving from traditional monolithic workloads to containerized workloads, or are planning to do so in the near future. As the number of containers grows, managing them becomes really hard. This is where Kubernetes comes into the picture. Kubernetes is one of the most popular container orchestrators out there.

Whether you are just beginning with Kubernetes or aiming for advanced usage, these 4 things will help you use it more productively. They give you practical skills that make your workflows easier and help you get more out of Kubernetes.

Architecture

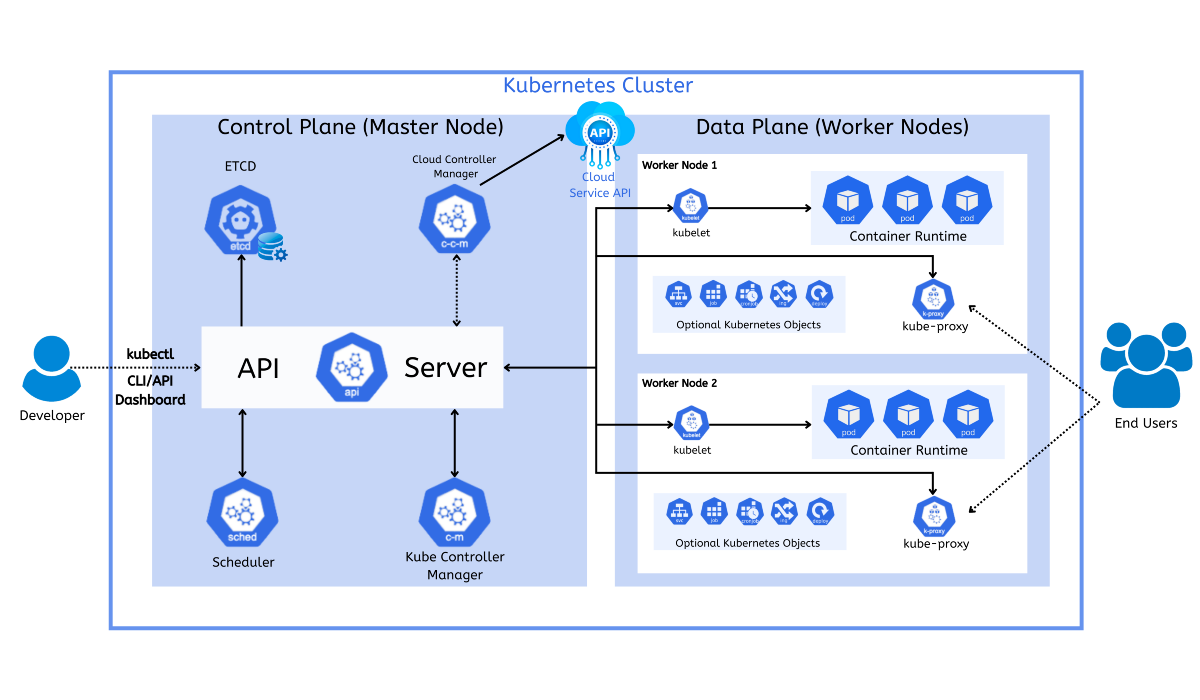

One of the first things you look at while learning Kubernetes is its architecture. The architecture is not complicated, but it can be daunting for someone who’s just getting started. At a very high level, Kubernetes manages your workloads as a set of resources, which you submit to the API server in a declarative manner.

I will try to explain the components in the above diagram one by one.

Control Plane Components

API Server – The API server receives requests from clients like kubectl or the dashboard, processes them, and persists the resources in the etcd database. It’s the only component that talks to etcd directly.

Controller Manager – The controller manager runs the controllers that are responsible for tracking the state of resources in the cluster. Each controller continuously compares the desired state with the current state, and if something does not match the desired state, it tries to bring the current state back in line with it.

Scheduler – The Kubernetes scheduler is responsible for assigning pods to nodes. It watches the API server for pods that have no node assigned yet and picks a node for each of them based on parameters such as resource requests, taints and tolerations, etc.

etcd – etcd is the key-value store that holds the state of the cluster at any point in time.
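To make the scheduler's job a bit more concrete, here is a minimal Python sketch of the filtering step, assuming a heavily simplified model. The `Node` and `Pod` classes, their field names and the `feasible_nodes` helper are hypothetical and written only for illustration; taints and tolerations are reduced to plain strings. The real scheduler considers many more parameters and also scores the feasible nodes before picking one.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    # Hypothetical, simplified view of a worker node.
    name: str
    free_cpu_millicores: int
    free_memory_mib: int
    taints: set = field(default_factory=set)


@dataclass
class Pod:
    # Hypothetical, simplified view of a pod waiting to be scheduled.
    name: str
    cpu_request_millicores: int
    memory_request_mib: int
    tolerations: set = field(default_factory=set)


def feasible_nodes(pod, nodes):
    """Return the names of the nodes that could host the pod."""
    result = []
    for node in nodes:
        fits_cpu = node.free_cpu_millicores >= pod.cpu_request_millicores
        fits_memory = node.free_memory_mib >= pod.memory_request_mib
        # Every taint on the node must be tolerated by the pod.
        tolerates_taints = node.taints <= pod.tolerations
        if fits_cpu and fits_memory and tolerates_taints:
            result.append(node.name)
    return result


nodes = [
    Node("node-a", 500, 1024),
    Node("node-b", 2000, 4096, taints={"dedicated=gpu"}),
    Node("node-c", 2000, 4096),
]
pod = Pod("web", 1000, 2048)
print(feasible_nodes(pod, nodes))  # ['node-c']
```

In this example node-a is too small for the pod's requests and node-b carries a taint the pod does not tolerate, so only node-c remains.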

Worker Node

On the worker node, we have these components:

- Container Runtime - You need a container runtime in order to start and stop containers. The container runtimes that Kubernetes supports include containerd, CRI-O, etc.

- Kubelet - The kubelet is responsible for communication between the API server and the node. It also interacts with the container runtime to run containers on the node.

- Kube-Proxy - Kube-proxy runs on every node and maintains the networking-related configuration on that node, such as the rules that route Service traffic to pods.

Resources

Kubernetes manages your workloads in terms of resources. Many resources come with Kubernetes as part of the default installation, and the set is extensible as well, which we will talk about in the next section. The basic unit that Kubernetes manages is the pod. A pod encapsulates one or more containers. One of the main features of Kubernetes is self-healing. Controllers keep checking the cluster, and when a resource is not in its desired state they try to bring it back, for example by restarting a failed container or by replacing the pod with a new one. Besides pods, commonly used resources include Services, ConfigMaps, Secrets, etc.
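The self-healing behaviour described above boils down to a loop that compares the desired state with the observed state and acts on the difference. Below is a minimal sketch of one pass of such a loop in plain Python. The `reconcile` function, the replica-count dictionaries and the action tuples are assumptions made for illustration, not the actual Kubernetes controller code.

```python
def reconcile(desired, observed):
    """Return the actions needed to move the observed state toward the desired one.

    Both arguments map a workload name to a replica count.
    """
    actions = []
    for name, want in desired.items():
        have = observed.get(name, 0)
        if have < want:
            actions.append(("start", name, want - have))
        elif have > want:
            actions.append(("stop", name, have - want))
    # Anything running that is no longer desired gets stopped.
    for name, have in observed.items():
        if name not in desired:
            actions.append(("stop", name, have))
    return actions


desired = {"web": 3, "worker": 2}
observed = {"web": 1, "worker": 2, "old-job": 1}
for action in reconcile(desired, observed):
    print(action)
```

A real controller runs this kind of comparison over and over, so a pod that crashes simply shows up as a difference on the next pass and gets replaced.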

Extensibility

Kubernetes is a pretty extensible system with multiple extension points. A few of them are:

- Container Network Interface (CNI) - You can use the networking implementation of your choice through a CNI plugin. Some popular CNI options are Cilium, Flannel, Calico, etc.

- Container Storage Interface (CSI) - If you’re running stateful workloads, you can use the CSI driver of your choice for storage.

- Kubectl Plugins - You can extend your kubectl client and configure it to use plugins. You can read more about it here.

Now, after learning about the basic resources that ship with Kubernetes, you may want to manage resources of your own that don’t ship with Kubernetes. You can extend Kubernetes by creating a custom resource definition (CRD) and custom resources, along with a controller. Together they act like native Kubernetes objects and let you manage your resources in the same manner.
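As a rough illustration of what a custom resource definition and a matching custom resource look like, the Python sketch below builds both manifests as dictionaries and prints them as JSON, which kubectl accepts in addition to YAML. The `example.com` group, the `Backup` kind and the `schedule` field are hypothetical names chosen for this example; you would also need your own controller to act on such objects.

```python
import json

# Hypothetical CRD that teaches the API server about a "Backup" resource.
crd = {
    "apiVersion": "apiextensions.k8s.io/v1",
    "kind": "CustomResourceDefinition",
    # The CRD name must be "<plural>.<group>".
    "metadata": {"name": "backups.example.com"},
    "spec": {
        "group": "example.com",
        "scope": "Namespaced",
        "names": {"plural": "backups", "singular": "backup", "kind": "Backup"},
        "versions": [
            {
                "name": "v1",
                "served": True,
                "storage": True,
                "schema": {
                    "openAPIV3Schema": {
                        "type": "object",
                        "properties": {
                            "spec": {
                                "type": "object",
                                "properties": {"schedule": {"type": "string"}},
                            }
                        },
                    }
                },
            }
        ],
    },
}

# Hypothetical custom resource of the kind defined above.
backup = {
    "apiVersion": "example.com/v1",
    "kind": "Backup",
    "metadata": {"name": "nightly"},
    "spec": {"schedule": "0 2 * * *"},
}

print(json.dumps(crd, indent=2))
print(json.dumps(backup, indent=2))
```

Once a definition like this is registered, objects of the new kind can be created, listed and deleted with kubectl just like built-in resources.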

There are also community projects like KubeVirt, which manages your VMs as Kubernetes-native resources. Crossplane is another project that manages a range of resources in a Kubernetes-native fashion. You can use Crossplane to manage cloud-specific resources as well, like EC2 instances, storage options and other similar resources.

Applications

In most organizations, you’re not going to run standalone resources. You will run a combination of Kubernetes resources, and we will call that combination an application here. To manage your applications, one of the most mature solutions out there is Helm. Helm acts as a package manager for Kubernetes and simplifies the process of application deployment. For example, say your application consists of a database, some secrets and a few deployments. Instead of deploying those resources one by one, you can use Helm to package them as a chart, and from then on you just have to run helm install with your release name and chart. So, the lesson for you is to package your application using Helm and then deploy it. Packaging your application also makes it distributable. But there are certain challenges with Helm as well. For details about the challenges, please read this well-explained blog.

To address those challenges, the Devtron team has come up with a Helm module that focuses entirely on managing the lifecycle of applications deployed using Helm charts. With Devtron, you can go one step further and install your Helm applications with much more visibility into your resources. You can check your application logs, manifests and deployment history, watch the health of your workloads, check the diff between deployments, roll back easily, etc. Devtron provides a seamless debugging experience for your applications, all in a single-pane dashboard.

You can learn more about Devtron here. Feel free to join our Discord community and share your experiences or questions.

FAQ

What are the key components of Kubernetes architecture?

Kubernetes architecture includes a control plane and worker nodes. The control plane has the API server, controller manager, scheduler, and etcd, which manage cluster state and workloads. Worker nodes run the containers via components like the kubelet, a container runtime (like containerd), and kube-proxy for networking.

How does Kubernetes manage resources like containers and pods?

Kubernetes manages workloads as declarative resources such as pods, services, ConfigMaps, and Secrets. It ensures self-healing by continuously comparing the actual state to the desired state and restarting failed resources automatically through controllers.

What makes Kubernetes highly extensible?

Kubernetes is extensible through interfaces like CNI (for networking), CSI (for storage), and kubectl plugins. Users can also create Custom Resource Definitions (CRDs) and controllers to add new resource types that behave like native Kubernetes objects, enabling broader integrations with cloud and infrastructure tools.

How does Devtron simplify Kubernetes application management?

Devtron enhances Helm-based application deployment with a visual dashboard that offers insights into application logs, manifests, deployment history, resource health, and rollback options. It simplifies debugging and provides a seamless lifecycle management experience for Kubernetes apps.